Where I get my papers from

I made a list of all the journals and conferences that have been featured in my paper summaries on this blog.

This week’s paper about citation farming is right at the intersection of two of my “favourite” subjects: AI slop and academic fraud.

Good product security is possible only when developers and security experts work together, but this turns out to be hard in practice.

It’s surprisingly hard to figure out how you can create and run a new Laravel app via Docker without installing PHP first.

This is a short blog post about a short Perl script that I wrote to execute commands in running Docker Compose containers.

The five most popular JS frameworks – Angular, React, Vue, Svelte and Blazor – use different rendering strategies, and it shows.

Did you know you can make your Docker web app containers available via simple names instead of hard-to-remember port numbers?

Wildcard certificates are amazingly easy to set up and manage with cert-manager. I don’t know how I managed without them for so long.

Have you ever accidentally committed a secret to a Git repository? It’s a simple mistake that can be hard to fix, but it’s also easy to prevent.

Browsing, like public transport, takes you from somewhere you’re not to somewhere you don’t need to be – but it does bring you places.

I bought myself a replica of LEGO’s 2016 Brick Bank from a Chinese copycat brand and it went about as well as expected.

Text rendered in macOS looks different from text rendered in Windows, mostly due to different philosophies about font rendering.

The Eurovision Song Contest is technically not related to the European Union, but it does affect how the EU is perceived abroad.

This paper presents a handful of recommendations for designing effective guidelines to tackle gender biases in AI.

The Corporate Bullshit Receptivity Scale provides a way to measure how susceptible someone is to corporate bullshit.

False friends in education

False friends always let you down at a moment when it's least convenient, so you'd better be well prepared…

How to get on and off a train (without annoying other people)

A train takes you comfortably from A to B, provided you can get on and off it. Unfortunately, that is where things still often go wrong.

Why Information Science (Informatiekunde) is basically just like Friesland

And other silly comparisons. From the archives, because once again I don't have time to write something new.

Chinese names are easier than you think, but at the same time they are also more complicated than you think.

China is one of the world’s most populous countries, which means it also has some of the largest cities on the planet.

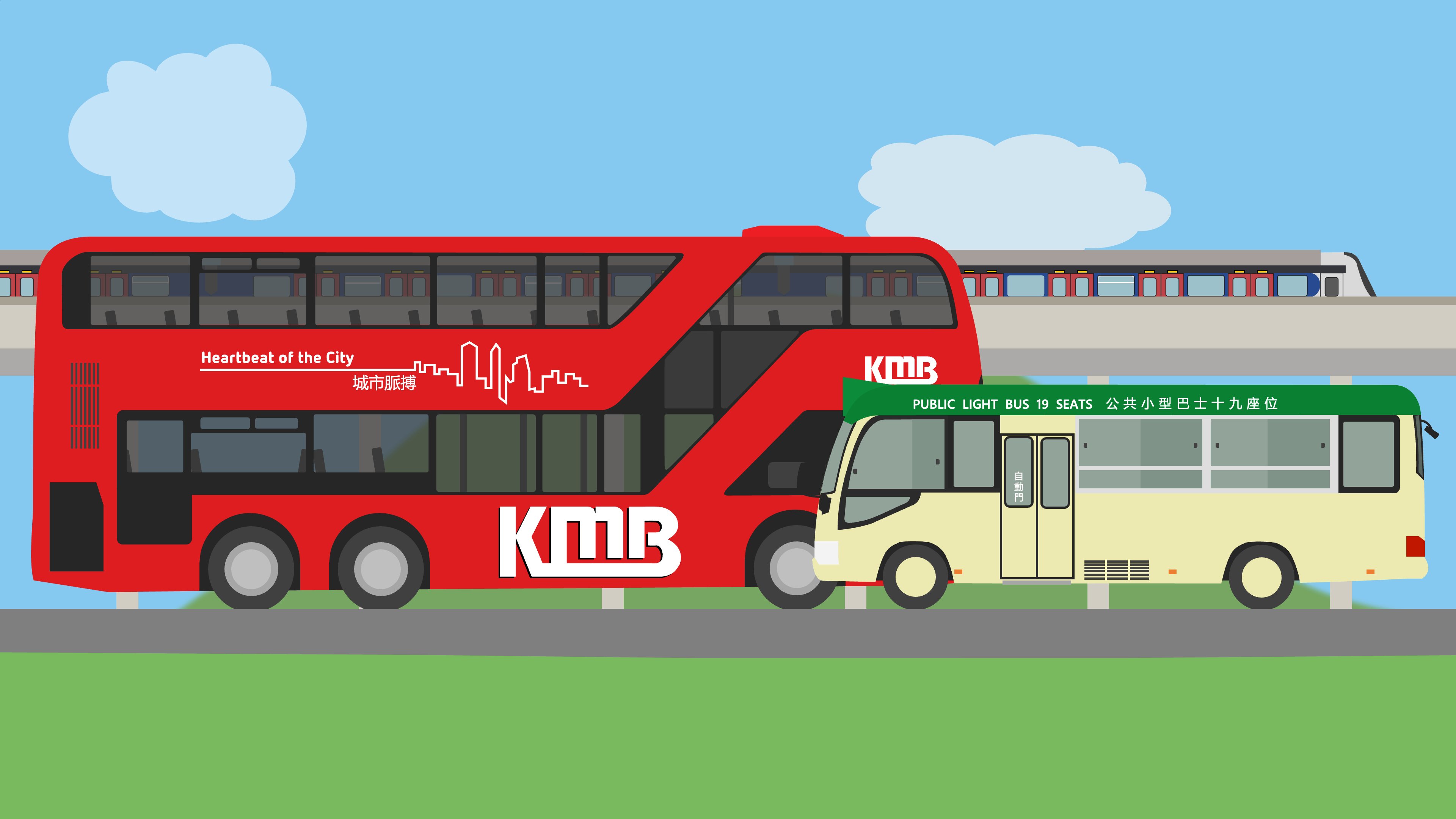

Depending on where you’re from, public transport in Hong Kong either offers a glimpse of the future or is stuck in the past.

I played Assassin’s Creed II in preparation for my trip to Florence this year and it was almost as good as Florence itself.

Oblivion Remaster is the same janky game as the 2006 original, but with a fresh coat of paint and some welcome gameplay improvements.

Caesar III remains the same game it was three decades ago, but the world and its expectations have changed.

The Pixel 10 Pro is supposed to be Google’s flagship phone, but it certainly doesn’t feel like one.

The Apple Magic Keyboard is an outrageously expensive wireless Mac keyboard with just one redeeming feature.

There is no right answer to that question, but having used both for quite some years, I can share what I like and dislike about each.

For reasons that are no longer clear to me, I tried to name each of my computers after a space shuttle (and gave up after 22 years).

I tried donating to OSS creators via GitHub Sponsors and learned some *mildly* interesting things.

Steve Jobs once said that “great artists ship”. That’s how I know I’m not a great artist – I haven’t shipped anything of note this year.

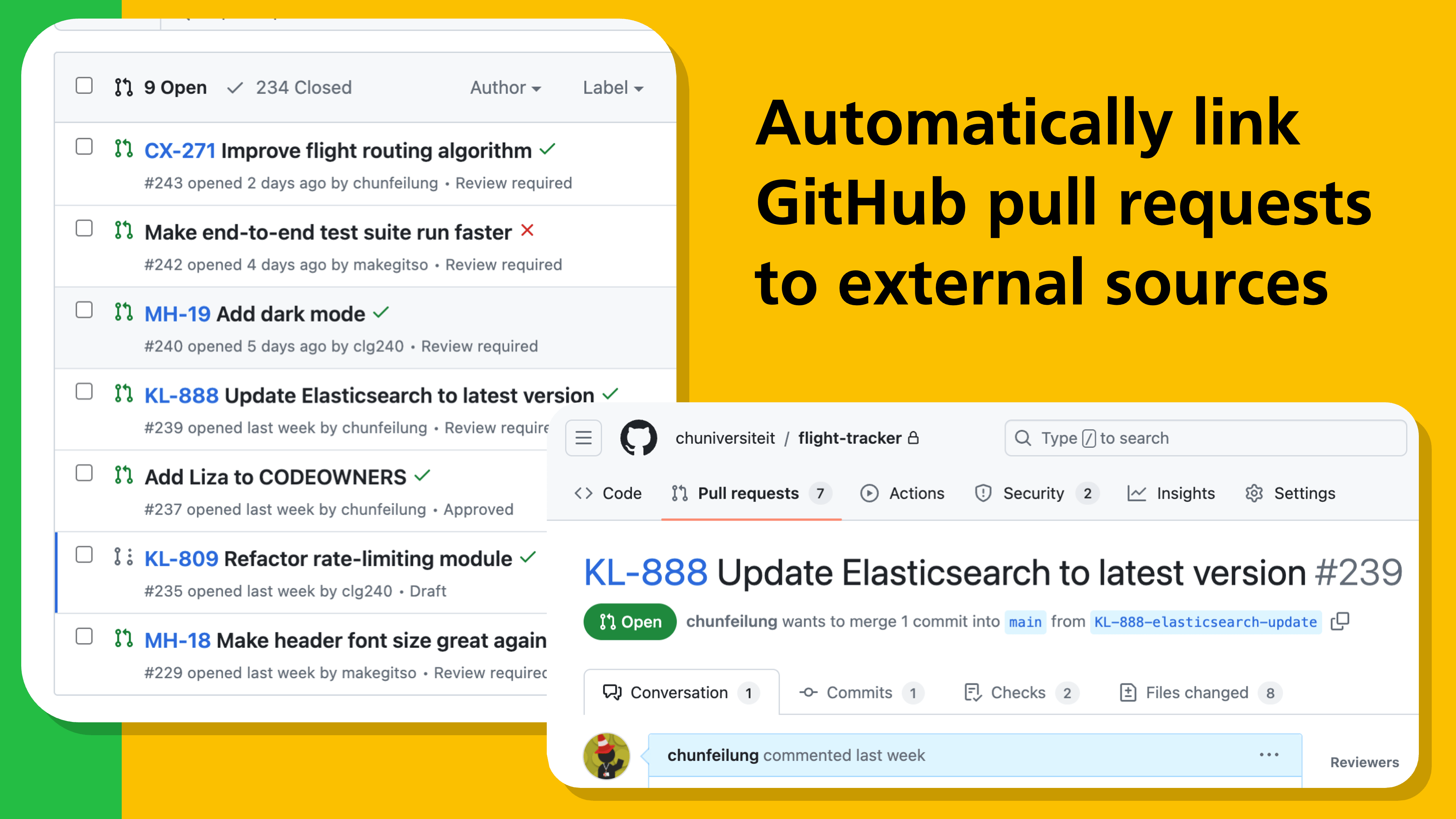

Alright is a browser extension that automatically turns JIRA (and other) references in GitHub pull request titles into hyperlinks.

For my first robotics project, I needed to find a way for students in Germany to control a robotic arm in the Netherlands using ROS.

I built a tool for the handful of people who listen to NPO radio stations, use Last.fm and know how to use the command line.